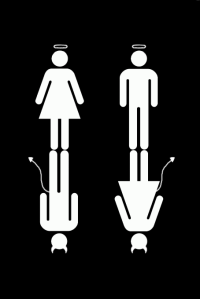

Evil Angels, Good Devils

I feel that killing is wrong. I believe that I ought to help the less fortunate. I should not take things which are not mine. It is not right to break my promises. It is bad to lie.

Why do I have these moral values in me? It seems weird to ask, but it is often fundamental questions like this that leave us stumped, frozen in our tracks, and tongue-tied. The usual reply is simply “We just have them.”

Needless to say (but I’ll say it anyway), ethics and morals are a complicated area in science, philosophy, religion, and every other form of human endeavour. It is not just a gray area, it is a gray area shrouded in fog. Inroads into the origins of morality only bring up more questions. The ethical issues arising from abortion, euthanasia, climate change, and national security have been debated at length by the best and the brightest, yet are still left in the troughs of inconclusiveness.

You might say morality comes from either nature (intrinsically gotten) or nurture (extrinsically given). Either we are born with it, or the environment we live in inculcated it in us. Well, we are definitely not born with a ready set of moral values, right? If we were, then people around the world would share the same ethical philosophies and moral judgments. Clearly the world is divided in its moral underpinnings: the voluntary self-immolation of a widow to signify devotion and faithfulness to her deceased husband (sati), female genital cutting performed as an act of purity and cleanliness, the mass killing of military combatants and innocent civilians alike while claiming just-war* as an act of freeing humanity from tyranny and oppression. Obviously, one man’s freedom fighting is another man’s act of terrorism.

So morality is a product of environmental influence then? Our families, friends, schools, religions and governments taught it to us? This reduces morality to just a set of codes and conventions to live by. It is right because people say it is right. It exists to serve the whole of humanity on a level of humaneness. If morality is simply a learned phenomenon, then anything could be moral. Killing could be moral if authorities taught it to be so. Well, this certainly explains how psychopathic murderers feel no wrong in doing what they do. But I personally do not subscribe to this. We feel something when a moral wrong is done. We emote when performing acts of ethical virtue. We are sanctioned by an inner conscience which directs us like a moral compass.

If it is neither nature nor nurture, then could it be because God said so? God directs us through a spiritual connection and through the Holy Scripture, giving us cognizance of a set of ethical rights and wrongs. This, however, brings us to the Euthyphro dilemma. Is something good because God says it is good? Or does God say it is good because it is already good? One can of course ignore such questions and simply go on with life, consoling oneself with thoughts such as “Living morally is not so hard, there is no need to complicate things”, or “I am a moral person already, I do not kill, steal, or do drugs. There is no need for me to think too much”.

Such explanations, however valid, are still not the same as justifications. An explanation simply provides a reason why you do what you do. A justification, on the other hand, shows that what you do can be legitimately defined as moral. Justifications provide a moral bearing to a claim.

Look at this example:

Pregnancy is a natural process. Therefore women should get pregnant.

We know the above conclusion is morally wrong, but why is it wrong? The reason is given, that pregnancy is natural. So why do we still disagree with it? It is because the statement is not justified. We usually don’t see it because we often assume it.

Now this is the sentence with the underlying justification stated:

Pregnancy is a natural process. Whatever is natural must be good. Therefore women should get pregnant.

Now we can see what we disagree on. We can’t disagree with “Pregnancy is a natural process,” because it is an indisputable fact. But we can disagree with “Whatever is natural must be good.” Reasons alone are not good enough. Underlying premises must be identified so that we can understand the justifications behind ethical and moral acts.

I am sure most people, myself included, are well aware of the ethical debates surrounding issues such as abortion, euthanasia, climate change, and national security. Therefore I will not go into those areas. Rather, the issue of anencephaly comes to mind. This is an issue that tears at the heartstrings of many, and is a moral dilemma that provides no answers, only acceptance.

“Anencephaly occurs when the “cephalic” or head end of the neural tube fails to close, resulting in the absence of a major portion of the brain, skull, and scalp. Infants with this disorder are born without a forebrain (the front part of the brain) and a cerebrum (the thinking and coordinating part of the brain). The remaining brain tissue is often exposed–not covered by bone or skin. A baby born with anencephaly is usually blind, deaf, unconscious, and unable to feel pain. Although some individuals with anencephaly may be born with a rudimentary brain stem, the lack of a functioning cerebrum permanently rules out the possibility of ever gaining consciousness. Reflex actions such as breathing and responses to sound or touch may occur.” – U.S. National Institute of Neurological Disorders and Stroke.

Why are there babies born like this? I don’t know. Besides the biological explanation (which lacks justification), there is no other explanation out there. All we can do is figure out what is the best option to undertake in a situation such as this.

A certain Baby K was diagnosed with anencephaly. This was found out during one of the pregnancy scans, while she was still in the womb. There was the option of abortion, but the mother did not choose it as she held it to be morally wrong. Life is sacred, and it must be left untouched. So the mother decided to give birth to this child, vowing to accept the condition of her baby no matter what it was. An unconditional love indeed. The baby was born, as doctors predicted, anencephalic. The only part of the brain the baby had was the brain stem, allowing the baby to have basic survival functions such as breathing and reflex actions. Some anencephalic babies are stillborn, while the rest pass away within days. Baby K, however, lived to two and a half years old because her mother decided to artificially prolong the baby’s life. Baby K’s life was one lived in and out of emergency rooms and nursing homes. Having no consciousness of all the possible suffering was perhaps Baby K’s blessing. Baby K eventually left her mother’s side, even though her mother fought so hard against the inevitable. So, was it the right thing for the mother to prolong the baby’s life? Or would it have been better to let nature run its course and let Baby K end her suffering earlier?

This case sparked a huge ethical debate within the medical community, as most anencephalic babies are only offered palliative care. Advanced life-support technology is normally not used as there is no known cure, and the prognosis entails the acceptance of inevitable death. The debate basically came down to whether life should be treasured in any form it might take, or whether the use of medical equipment on an unsalvageable case should be deemed wrong. You can read the court ruling here.

Honestly, when I knew about the case, I just wept. It is a tragedy of life, and there is nothing we can do to solve it. Acceptance provides the only emotional and moral outlet.

So how can we rationally apply ethical principles to help justify the best moral option to undertake? The textbook method seems to be of no avail. Even from a personal standpoint, I find it near impossible to pick one course of action over another. On an appeal to feelings, however (although logically fallacious), I would subjectively give the sanctity of life the benefit of the doubt and love life in all its forms. Death is a permanent status quo, and I would rather have the option to choose than not. Knowing exactly what to do will nevertheless remain a mystery to me until I have worn the shoes of ethical dilemma and walked a mile in them.

*Just-war is a theory of military ethics that sets the conditions under which initiating a war is morally right and justified.

Filed under: Controversy, Education, Ethics, Health, Religion, Society | 2 Comments

Tags: anencephaly, Baby K, Ethics, justifications, morals, nature, nurture

Many might ask what in the world the social sciences are about. What do they do, how do they work, why do we pursue them, and what significance do they have? Suffice it to say, as much as we live in a natural world called the Universe, we also live in a social world called society. While the natural sciences of chemistry, biology, ecology, and physics study the natural world, the social sciences of psychology, anthropology, sociology, economics, politics, and history study the societal world.

In short, social science is the study of human behavior and human society. It grapples with the intricacies of human nature, deriving formulations based on the same scientific methodology used in the natural sciences. Its impact is vast and its applications innumerable, ranging from the ethical values affecting government policies to the analysis of popular culture trends among youths. The scientific method is applied throughout the entire social science discourse.

Just as you would form a hypothesis such as “intensely minute quarks form the inner clockwork of atoms” (natural science of physics), you would also form a hypothesis such as “the use of a particular language forms the lens through which we perceive reality” (social science of linguistics). Also, just as there are laws derivable from natural science, such as the laws of thermodynamics, there are laws derivable from social science, such as the law of scarcity. One difference between these two major sciences is in their output. The natural sciences often (but not always) produce black-or-white results, whereas the social sciences often produce results in various shades of gray. It is, however, apt to keep in mind that all scientific laws are provisional, subject to change should new evidence emerge that offers new insight into a subject matter.

Key workings of the scientific method:

- Variables – These are the different things that can influence or be influenced by a particular state of being in something else. Variables can be anything. In the social sciences, variables are usually characteristics that differ among individuals and groups. Two or more variables combine in effect to form a hypothesis. E.g. Population growth (variable 1) decreases with education (variable 2).

- Hypothesis – These are tentative statements of relation between facts, and typically attempt to mesh at least two variables together into a cause and effect relationship. Hypotheses are meant to be tested scientifically so as to form theories or laws that allow consistent attribution. E.g. Being bullied often leads to exam failures due to lower self-esteem and confidence.

- Theory – These are sets of concepts at a fairly high level of generality. Theories are built upon hypotheses that, through experimentation and observation, have proven relatively accurate at predicting outcomes. A hypothesis gains significance once it becomes a theory, and as such, provides a framework with which one can interpret further findings. E.g. Einstein’s Theory of Relativity, Rawls’s Theory of Justice and Darwin’s Theory of Evolution.

- Reliability – This refers to the consistency and repeatability of a research finding. All hypotheses must pass this test to establish their usefulness. Scientists usually perform retest after retest so as to ensure reliability. Proving internal consistency – the extent to which tests gauge the same concept – also adds to reliability.

- Validity – This refers to the degree to which the assumptions and research methods used reflect the underlying concepts under inquiry. There is validity if there is a relationship between the subject of study and the observed outcome, if the operational method of how concepts are related is based on actual causality, and if the research results generalize fairly well to other scenarios.

- Induction – This is the process of building theory through accumulation of various inquiries. Observations are made first, followed by theorizing. E.g. Asian societies are predominantly collective in culture, therefore I theorize that…

- Deduction – This is the process of theorizing followed by inquiries to test the underlying logic. E.g. I theorize that violent video games promote violent behaviors in teens (followed by experiments and observations to test the hypothesis).
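To make a couple of these ideas a little more concrete, here is a minimal sketch in Python. It is only an illustration and not part of any formal methodology: the numbers are made up, the variable names are hypothetical, and using the Pearson correlation coefficient to gauge both the hypothesis and the test-retest reliability is my own assumption about one reasonable way to do it.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two samples of equal length (at least 2 points)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / sqrt(var_x * var_y)


# Hypothetical, made-up data for the two variables in the example hypothesis
# "population growth decreases with education".
years_of_schooling = [2, 4, 6, 8, 10, 12]          # variable 2
births_per_woman = [6.1, 5.4, 4.6, 3.9, 3.0, 2.2]  # stand-in for variable 1

# A negative coefficient is consistent with the hypothesis;
# it does not by itself prove cause and effect.
hypothesis_r = pearson(years_of_schooling, births_per_woman)

# Test-retest reliability: the same (made-up) survey given twice
# to the same five respondents.
first_survey = [10, 12, 15, 18, 20]
second_survey = [11, 12, 14, 19, 20]
reliability_r = pearson(first_survey, second_survey)

print(f"hypothesis correlation: {hypothesis_r:.2f}")
print(f"test-retest correlation: {reliability_r:.2f}")
```

A coefficient near -1 or +1 means the two lists move together closely, while one near 0 means they hardly move together at all. Real studies would of course need far more data and proper significance testing.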

Graphically, the methodology looks like this:

Social scientists use the above reasoning approach to both deduce and induce human behavioral patterns and truths that we often take for granted without an inkling of consideration, such as the use of language, monogamous familial systems, currency trading, omnivorous diets, bipedal walking, popular culture etc.

The approach to scientific research can be either quantitative or qualitative. The quantitative approach seeks to measure a thing in numerical terms, while the qualitative approach refers to the collection of non-numeric data that provides descriptive explanations for social phenomena. These translate into research methods such as surveys, polls, laboratory experiments, field observations, participant observations, and secondary source data collection.

Importantly, it should be mentioned that science is fallible because it is a human endeavour. Theories and even scientific laws have been known to be proven false. Thus, although credible, the scientific method is not and was never meant to be a solution to all problems. It simply serves as a tool and nothing more.

Filed under: Education, Mindsets, Reason, Society

Tags: science, scientific method, social

Born Into Symbols

What do your ethnicity and your nationality have in common? If you think about it, there is no similarity whatsoever. Being born in China does not necessarily make me Chinese, and not all Caucasians are born in western countries. But one commonality ethnicity and nationality share is that both are symbolic in nature. They both represent something other than themselves.

Just like language or words, ethnicity and nationhood are in essence mere symbols which we use to represent something else. By symbolic, I mean the way we use the word “dog” to mean the actual living animal. The word “dog” by itself carries no meaning if not for the symbolized. The dog phenomenon is labeled by us using the word “dog”, without which we would not be able to refer to it other than by using an assortment of other words or gestures. So how do ethnicity and nationality share that same symbolic vein? One need not look too deep to realize how.

Both ethnicity and nationality are myths. They are constructed out of nothing so as to symbolize something else.

We are all born somewhere on this earth, carrying with us the genes of our parents. Somehow, by being born on this particular “somewhere” we have come to adopt a certain nationality and take on a citizenship. And somehow, by carrying the genes of these two separate individuals, we have come to adopt a certain ethnic background, heritage and culture. Where was the choice in all this? There was none, but that is still acceptable because there is no downside to having no choice in itself. What is not acceptable, however, is that ethnicity and nationality invariably carry all their peculiarities, stereotypes, traditions, attributions, meanings, and expectations along and place them upon us. It is like being born with an instruction manual detailing your function, purpose, role, and the appropriate behavior to follow.

But then again, our ethnic background and our national citizenship are just constructs to categorize each of us under a certain label. In principle, we are free to be whatever we want to be.

What if I were born on the Moon with mixed genes of one-fifth Chinese, one-fifth Caucasian, one-fifth African, one-fifth Javanese, and one-fifth Arabian? Does that make me a citizen of the Moon, carrying the ethnic background of all those different genealogies? No. The same applies if I were born as an African in America, as an Indian in Mongolia, as a Chinese in Armenia, or as an Arab in Japan. I am born an empty template to be filled as I go along.

Nation building and racial acculturation are both methods to instill the symbolized into the symbolic, to create meaning through referencing. There is nothing wrong with that, but then again, what exactly does a particular nationality or ethnicity mean or refer to? In language, I know that the word “car” means the physical car. But what does being American mean? What does being French mean? There is nothing physical that these symbols symbolize. Just mere concepts and ideals. There is no single object I can point to and declare “This is what defines an American” or “This is what defines being French”. Ethnicity and nationality are symbols for which there is no concrete phenomenon (landmass is arbitrary). People can only speak of the intangible when asked what their ethnicity and nationality mean. “Being American means having liberty and freedom!” and “Being French means having my rights and my voice heard!” for instance.

There is little wonder why young countries have such a difficult time forming an identity to instill into the populace. Not enough conditioning has taken place.

This symbolic construction used by nations and ethnic cultures, whether knowingly or unknowingly, can also be applied to every societal group: religions, clubs, companies, schools etc. So where does the symbolic stop, and the reality continue? Perhaps it is so intertwined we do not know anymore, or perhaps we just need to dig a little deeper.

Filed under: Communication, Religion, Society

Tags: ethnicity, meaning, nationality, nationhood, Symbolic, Symbols

Two’s A Crowd

It logically follows from the phrase “Only a fool will expect something different from doing the same thing,” that if I do something different I can expect a different result to manifest. It seems simple enough, but suffice it to say that it is extremely difficult to execute in practice, save for trivialities. I found it an impossible task to make practical use of that phrase. I knew I wanted improvements to my life, but what could I do that was so different that I would get a totally different outcome? The problem was not that I didn’t want change (I did), but that I couldn’t know what I didn’t know I didn’t know (yes, it is ‘didn’t know’ twice). Quickly, my ambitions to exercise my newfound wisdom shifted from determination, to procrastination, and finally to neglect.

The first time I heard the phrase “Only a fool will expect something different from doing the same thing,” I immediately pounced into agreement. Back then, it was such a revelatory statement for me that it seemed as if I had gained infinite wisdom. Now, even though it may still be a wise saying, it is nonetheless insufficient for me. Don’t get me wrong, I love that phrase to this day and hope to live by it every time. But it is just hard to follow up on that nugget of wisdom in a world that serves you dumbed-down conformity every chance it gets. It is like being physically blind your whole life and being told that there is such a thing as color. How can you ever know? You can’t. You can’t seek to know what you don’t know you don’t know. It is an impossibility.

However, it is possible to know what you don’t know you don’t know once you know what you don’t know. Simply put, you are only able to find out what is two steps ahead of you once you find out what is one step in front of you. Think about it for a while.

It was only through knowledge of new things that I finally managed to exercise what I learned, to finally have the ability to appreciate what it truly means to do things differently. There was a need to reinvent the way I think, to cast away the old mindsets and learn anew. It was a humbling yet transcendent experience, and was the only way to see further in the realm of the cognitive.

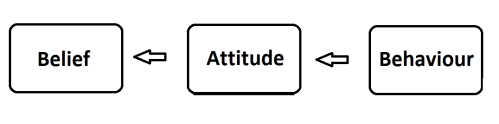

You see, there is a pattern to how cognition affects actual physical behavior, and it looks like this:

Your belief changes your attitude, and your attitude in turn changes your behavior. This is the normal flow of immaterial thoughts into tangible actions. Any psychologist can tell you this. For instance, you believe in animal rights, and thus you have an attitude of care and compassion towards animals. Your physical behavior then finally appears in forms like participating in animal rights groups. So where I stumbled was the last part, where my belief and attitude could not translate into a physical act. Or so I thought.

Where it really went wrong was not changing my attitude into a practicable behavior, nor was it changing my belief into a conscious attitude. It was changing my belief system itself.

For far too long many of us have been living in a box within a box, but we do not realize it. We are hopeful that once we look out of one layer of a box we become liberated in our thoughts. We learn something new and we go “Wow now I know!” Yet we are unaware of the fact that we are in reality still confined, that there is still another level to transcend. That second box has a name, and it is called duality.

Whether consciously or subconsciously, it is ingrained in us to think in terms of binary opposites. There is good so there must be evil. There is peace and there is war. There is religion and there is atheism. The list goes on; majority vs. minority, black vs. white, west vs. east, pure vs. impure, women vs. men, heterosexual vs. homosexual, civilized vs. barbaric, science vs. art, logical vs. emotional, young vs. old, pretty vs. ugly, thin vs. fat, modern vs. traditional, rich vs. poor, normal vs. abnormal, fun vs. boring, trendy vs. outdated, famous vs. unknown, fast vs. slow, hot vs. cold, highbrow vs. lowbrow, individual vs. collective, stupid vs. smart, fresh vs. rotten, instant gratification vs. delayed enjoyment, good vs. bad, us vs. them, me vs. you, wrong vs. right, truth vs. lies.

This dichotomous thinking makes us derive meaning from binary opposites, forcing us to be cognizant only in terms of absolutes. If it is not good then it must be bad. There seems to be no in-between area where it is neither good nor bad. The middle area is missing from our belief system’s point of departure, and extremism of thought is the result. The fact is, however, that there is always a gray area between the blackest black and the whitest white, whether or not we realize it.

How has this false dichotomy managed to permeate our essence of being so deeply that it has even become a given fact of life? Sadly, it is the institutions of western philosophy (in its broadest sense), mainly using the ubiquitous effect of mass media to transmit dualist ideology, that managed to naturalize dichotomous foundations of cognition throughout civilized industrial humanity. The worrying part is not that the media transmits such ideology, but that they themselves treat dualism as an affirmative, which translates itself into normative cultural structures via repeated cognitive inbreeding amongst the populace. In other words, common-sense knowledge as we know it is only as common-sense as humanity treats it. It can be changed.

Straying away from binary thinking is possible once we take a step to know what we don’t yet know. Here are some examples of revolutionizing the belief systems of our world:

The Tata Nano: A car that costs just US$2000 to purchase, yet is structurally sound and fully functioning. This gives families in third world countries, who formerly depended on a single scooter to transport an entire family, a newer and safer method to travel.

Kiva: A funding project that offers microloans to third world entrepreneurs using innovative new methods. This allows poverty-stricken segments of society the opportunity to get out of the poverty cycle through their own hard work and not through receiving donations.

Cheap Prosthetic limbs: US$20 limbs that work. This gives every amputee, whether rich or poor, an opportunity to regain the ability to work, live and play properly again.

What assumptions do the above examples abolish from our old beliefs? Perhaps that of “the poor need my help.” The poor strata of society in our world have always been regarded as people who need aid, charity, donations, assistance and help. Never as a people who are also individuals deserving the dignity of a full life. As it seems, the phrase “the poor” has been used as a blanket term to cover that side of humanity who are financially incapable. Unbeknown to us, individuality and persona have been lost through that mode of belief. Poor people no longer have a name, they are just a template figment in our imaginations. This is the byproduct of a dichotomous mindset birthed from a collage of us vs. them, rich vs. poor, first world vs. third world, and benefactor vs. beneficiary. How can the poor be truly liberated then, if our very point of departure is to treat them as an underprivileged class?

The world at large has, however, been starting (albeit slowly) to adopt postmodernist views, which largely emphasize the faculty of knowledge. The assumption is that nowadays, people know what they are doing, watching, eating, studying etc. The people are now an informed people.

This old belief of duality defining the global frame of thought is fading. This is a case of pragmatism rather than mental foresight, however, as can be seen from the motivating factors behind innovations based on a change in beliefs. It is a kind of mental remedy to alleviate crises rather than a true desire to educate oneself adequately so as to perceive phenomena grounded in the metaphysical effectively. The fact remains, however, that the spark of innovation stems only from a privileged postmodern standpoint. Knowledge, information, and data are indeed the new soil from which newer knowledge, information, and data are born. Not having the former is thus an underprivileged position to be in.

How do we even change our beliefs then? Is it even possible since it is so innate already? Yes it is possible, because of this:

We can shape our beliefs as much as our beliefs shape us. Modifying our behaviors and attitudes can slowly but surely revise the way we think fundamentally. Long gone can be the days of false dichotomies permeating our societal world.

As you can tell by now, humility towards new modes of beliefs is crucial in the undertaking towards an un-boxed, postmodern mental inclination. Some people may say that we have to emulate the past in order to rectify our future, but only a fool will expect something different from doing the same thing.

Filed under: Controversy, Education, Mindsets, Motivation, Reason, Society

Tags: Beliefs, binary, dichotomy, duality, mindsets, opposites, postmodernism, psyche

I Will Read This Later

If you are like me (or any other person in this world actually), you might have come across or even experienced first hand what procrastination means. It is when you bookmark that web page to read later, it is when you decide to watch that one last YouTube video before starting on your assignment, it is when you decide to leave the dishes in the sink planning to wash them later, and it is when you become so determined to go for a jog only to put it off to another day. “I promise I will do it later!” goes the old refrain. This blog here by David McRaney gives wonderful insight into the bewildering world that is procrastination. Do have a read, and don’t say you will do it later. You know you won’t.

“..studies also show you don’t work better under pressure, and waiting for inspiration isn’t realistic. You get motivated after getting started, and you are worse at what you are trying to accomplish when pressed for time. Procrastination can’t be eliminated, only managed.” –excerpts from David McRaney’s blog comments.

Filed under: Education, Mindsets, Motivation

Tags: future, later, procrastination, wait

Time For A Change

Humanity has changed much since the Middle Ages. From the Age of Enlightenment to the age of revolutions and now into the modern era, we as a species have advanced much in the area of technological science. That much is obvious. What has not kept up, however, is the way we conceptualize ideals, the cosmology that directs us, the perceptions we use to navigate our lives, and the knowledge we hold as truth. In other words, our thinking has not caught up with our doing, and the repercussions of our actions are about to come back to bite us before we realize what we have done.

See, we are born into this natural world whose existence has been largely independent from ours. The planets will always revolve around the sun, the sun will rise after each night, and the day will start all over again. No one can change this, and this is the expectation of reality we know of and live by. The truth is, however, that we are not independent from the natural world in which we live and breathe. There exists an interdependence between all life, our planet, and the universe at large. We come into this world as takers, we nourish ourselves in the bosom of mother earth, and if we do not give back, there will soon be nothing left to take.

Bringing it down to earth, we expect water to flow from our taps as soon as we turn them on, we expect the nearest supermarket to be stocked with food ready for purchase, and we expect our homes to be a sanctuary where we can be free from the problems of life. ‘Life’ as we know it, however, is about to change drastically, for better or worse, whether we like it or not. We have always prided ourselves on our resourcefulness, and we treat the material things of this world as mere resources to benefit our lives. We use, and we dispose. We consume, then we simply resume. There seems to be no downside to this resourceful mentality, and we just keep doing it, all for our own enjoyment and luxury. Nothing could be further from the truth.

Every shopkeeper knows the importance of taking a stock check at the end of every business day so that he knows what is running out and what to replenish. When was the last time we took a stock check of our earth? Here are some estimates. Precious metals such as silver and gold will run out in about 14 years. Copper, which is widely used in electrical wiring, will run out in about 26 years. Tantalum, used to make our ubiquitous mobile phones, will run out in about 28 years. Coal has about 70 years left, natural gas 37 years, and uranium 23 years. And of course, our all-important petroleum, a.k.a. black gold, will cease to serve as a fuel within 13 to 23 years. All of the natural resources mentioned above are non-renewable.

The problem is not the lack of resources, which is a given in the first place, but rather the worldview we hold as humanity. We have to start acknowledging a cyclical rather than a linear worldview, moving from a use-and-dispose fantasy to a use-and-reuse reality. Sustainability rather than consumption should be the modus operandi, the way we work. For far too long, needless wastage has been occurring. Uranium has been used in weapon experimentation, arable land has been reallocated for luxury produce, perishable food gets left on store shelves, fresh water gets processed with vegetation to be made into alcohol, and oil gets left in idle engines and wasted on road trips. We have to start maximizing the use of each and every resource still available, clearing out industrial processes that cause wastage, and redefining the lifestyles we currently embrace.

Humanity must seek to relearn the truths. We have to change the way we think at a fundamental level and not just participate in environmentally friendly activities. Also, the ideals of capitalism and a market economy must now be put to an end, as they are contrary to the possibility of a world of sustainable resources. It is a tall order, but if we can start to see that the value of each and every human being is an inherently aesthetic one rather than an economic one, that each individual is precious in himself or herself and not because of what each can produce, it is definitely a possibility, if not a necessity.

Filed under: Education, Environment, Health, Mindsets | Leave a Comment

Tags: earth, Environment, natural, planet, resources, sustainability, value, worldview

The Reason for Our Reasons

Another missing piece of the conversation puzzle. Sometimes people talk, quarrel, argue or debate, and positive results come of it. But oftentimes they do not. Perhaps sometimes it is less about the truth behind our reasons and more about their appropriateness. This article from Malcolm Gladwell really gets you thinking about how we as people converse.

Filed under: Communication, Education, Mindsets, Reason | Leave a Comment

Tags: Communication, conversation, Reason

Creating Your Self

“Who am I?” goes the age-old question of self-identity. Someone may answer that he is Chinese in ethnicity, and another may answer that he is an artist by profession. Both are correct answers, yet neither gives a complete picture. The list could go on forever if you truly wanted to state everything that you are. Are you able to list some of yours?

For me, I can say that I am a male by birth, an Asian by descent, a student by vocation, a writer by interest, a Christian by religious affiliation, a gamer, guitar player, friend, boyfriend, Singaporean, a night person, cleanliness freak, a truth-seeker, meat-eater, chocolate-lover, an undergraduate, easterner, son, grandson and many, many more. All of these identities form a part of me, yet each one on its own is only a disconnected fragment. I am not just the sum of all my identities put together; I am a multiple of them. Every one of us, by virtue of the fact that we are all capable of creating new ideas and concepts, is more than able to create our own self.

For example, you may be born a female, but that does not mean you must be feminine. An Asian is not necessarily short, an African-American is not necessarily good at sports, and a child does not necessarily know less than an adult. Racial, age, ethnic, and gender stereotypes only serve as easy-to-remember categorizations and profiles of each group, and do not equate to the complete truth. This simple notion underlies the idea that we are all ever-changing, ever-transient beings.

There is no “true self” or identity that you are born with that can’t be changed (although people tend to keep their identities constant and are resistant to change). A person born into a poor family has a chance to become rich, and an ace student can fail the very next day. Even gender or racial boundaries can be blurred, such as Singaporeans identifying themselves more with western culture than with their Asian roots, and with gender-changing procedures becoming more commonplace, there are even half-male, half-female genders around. But race, age and gender are ascribed identities that most people accept and live with comfortably. More important are the identities that are inscribed upon oneself, such as social status, educational level or even weight-range profiles.

With the right amount of motivation, skills and socialization, you can change the personal traits or attributes you deem not beneficial, and take on new ones. I know of Asians who speak with such perfect accents (and with the help of dyed hair) that I cannot differentiate between them and an ethnic Briton. Westerners have also been known to shed their identity and take on a new one, such as when Ted Kaczynski, an American mathematician, decided to engage in terrorist acts against his own race and country, inflicting damage on the social group he previously identified himself with.

Although this leads one to think that we are all masters of our own self, it is sadly not entirely the case. I can’t choose to be whoever and whatever I want to be; I am restricted by my social identity. As much as I want to be Superman, I am not. Not because I can’t fly or because I am not Clark Kent, but because everyone else thinks I am not. You decide to a certain extent who you want to be, but much of it rests in the hands of the people around you. People decide who you are, and you in turn decide who they are.

Take a moment now and think of all the different social groups you belong to. How often have you identified your sense of self with the groups you engage in? Maybe you have proudly proclaimed that you play the clarinet in a symphonic orchestra, or that you voted for the Republicans in the previous elections. Maybe you have affiliated yourself with a certain religion, recreational sport, hobby, rights union, nationality, or species. Every one of us belongs to a grouping one way or another. Even if you are anti-institutional, you still belong to a group. Be it a gang or a hippie cult, every group brings with it a certain culture to follow in order to identify oneself with it.

Case in point: the group makes you as much as you make the group. Without going too deep into theories of self-identity, part of who we are and how we think is determined by a collective identity. By belonging to a social category and by interacting with other people, you begin to form your self through a series of affirmations, associations, refinements and context salience. If people continually comment on how well you dress, you will most likely become proud and confident of your dress sense. It is a kind of self-fulfilling prophecy laid down by others onto your life. Similarly, if people always know you as “the tech geek”, somehow or other (whether you really are or not) you will become just as they said.

However, the situation also needs to be taken into account. You are unlikely to identify yourself as a military expert in front of army generals, but in the presence of your own friends, you may be known as such. If you are a policeman, for example, you might also find it unwise to mention your uncle’s criminal record to your colleagues. The context we are in plays a huge part in determining whether we want to be known as such and such.

Adapting to life’s ebbs and flows is part of what forms your sense of identity, and many a time, those ebbs and flows are the people and groups around you, pushing and pulling that not-yet-kneaded dough which is your identity into shape.

Filed under: Mindsets, Motivation, Reason, Society | 2 Comments

Tags: age, am, create, ethnicity, gender, I, identity, me, race, religion, self, self-identity

How We All Stopped Growing

People grow (read: grow up) when there is a need to. A baby learns to talk so as to communicate with his or her caretakers, an adolescent puts in hours of studying to achieve better grades, and a teenager matures into the roles of adulthood in order to put food on the table for his family. This is all part and parcel of life. However, have you noticed a staggering delay in the age at which people reach these few important stages in life compared to just a decade ago?

Parents perhaps complain about how their kids are not as sensible as other kids their age. Is this a nature vs. nurture problem? Or could there be other factors involved?

Read this and come to your own conclusion.

Filed under: Controversy, Education, Society | 1 Comment

Tags: age, development, grow, Growth, learning